For people experiencing paralysis, communication often means spelling out words one letter at a time by using their eyes to select letters on a keyboard, according to Daniel Rubin, a critical care neurologist at Massachusetts General Hospital and an assistant professor of neurology at Harvard Medical School.

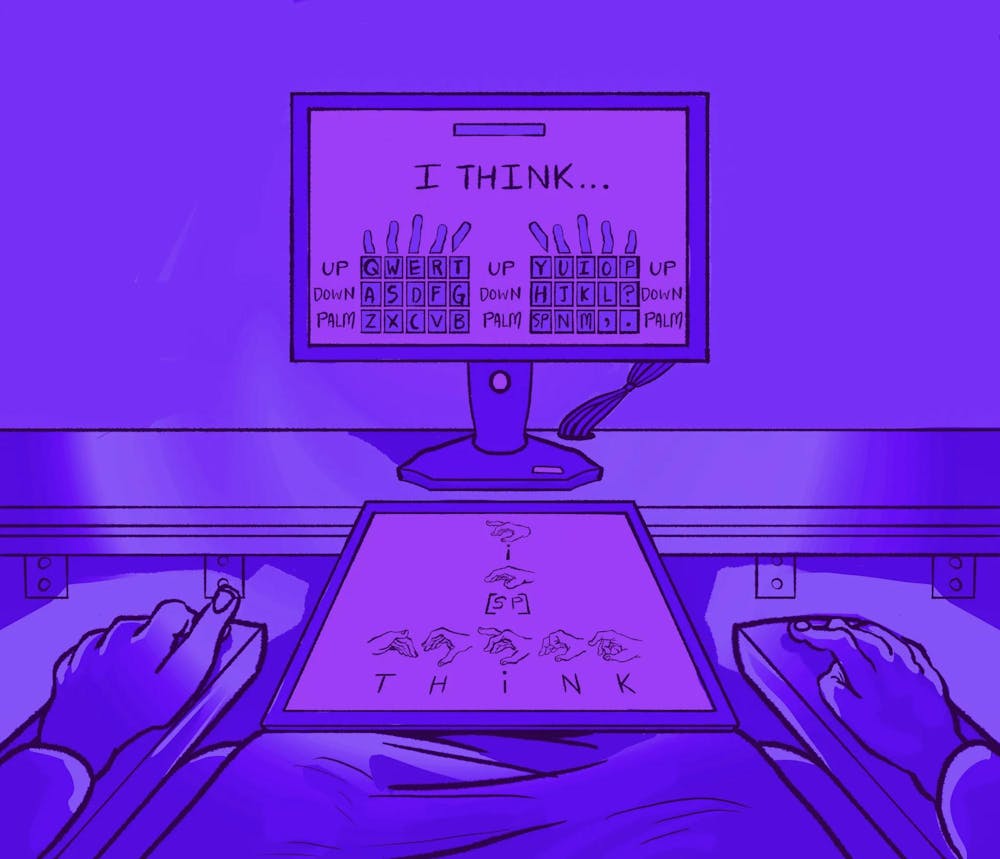

This process can be “excruciatingly slow and very frustrating,” Rubin said in an interview with The Herald. But technology pioneered in a Brown-affiliated study could make this process more efficient — patients would only need to mentally attempt to type on a QWERTY keyboard.

Rubin is a principal investigator on the Nature Neuroscience paper, which details how an intracortical brain-computer interface neuroprosthesis, or iBCI, can decode attempted finger movements using signals in the brain. With this technology, individuals could type 22 words per minute, a faster rate than previous eye-gaze-tracking technologies have enabled, according to Rubin.

“For people who have paralysis, it’s not just getting your message out there,” Rubin said. “It’s being part of the conversation in as close to real time as possible.”

The research — which is a part of the larger body of work by BrainGate, a leading computational neuroscience research team across multiple universities — is being conducted at Brown, Stanford University, Emory University and the University of California, Davis. Currently, nine patients are enrolled.

Even though patients who are paralyzed cannot carry out movements, their brains still generate the neural activity associated with attempting them. Those signals can be decoded to produce communication, according to Brown and Harvard postdoctoral research fellow Justin Jude, the first author on the paper.

Doctors surgically place tiny electrode arrays in the cortexes of patients’ brains to track neurons’ signaling through changes in electrical activity. Once the arrays, which are about half the size of an American penny, are in the brain, researchers can record the signals associated with attempted finger movements, Jude said.

The signals are decoded into letters, and the letters are then “stitched” together into sentences using a recurrent neural network, or an RNN. According to the paper, the RNN — a deep learning algorithm — infers the letters in sequence as the user performs a series of attempted finger movements.

To train and test the technology, the researchers had patients use the iBCI to type prompted sentences. Then, they checked whether the decoder’s output matched the patient’s input, according to Rubin. The algorithm was also configured to autocorrect errors, Jude said.

This work has immense impact, according to Rubin. For example, one paralyzed patient was able to return to work because the technology improved his ability to communicate.

This research parallels efforts to decode attempted speech, according to Jude. This past week, the researchers published another paper that used the same decoding methods as the typing study. Instead of decoding attempted finger movements, they recorded neural activity to decode the attempted mouth and tongue movements that produce phonemes, or phonetic units.

Research is aiming to move “from encoding movement of the hand and arm to decoding the movement of the muscles of articulation — the muscles that we use to talk, the face, mouth, jaw, tongue — and in doing so, use the same sort of decoding approach, but predict what sounds are someone trying to make as they think about talking,” Rubin said.

“Our amazing clinical trial participants really deserve all the credit,” Professor of Engineering and of Brain Science Leigh Hochberg ’90 wrote in an email to The Herald. “They are participating not because they hope to gain any personal benefit, but because they want to help us to develop and test novel neurotechnologies that will help other people with paralysis.”

Elizabeth Rosenbaum is a senior staff writer covering science and research.