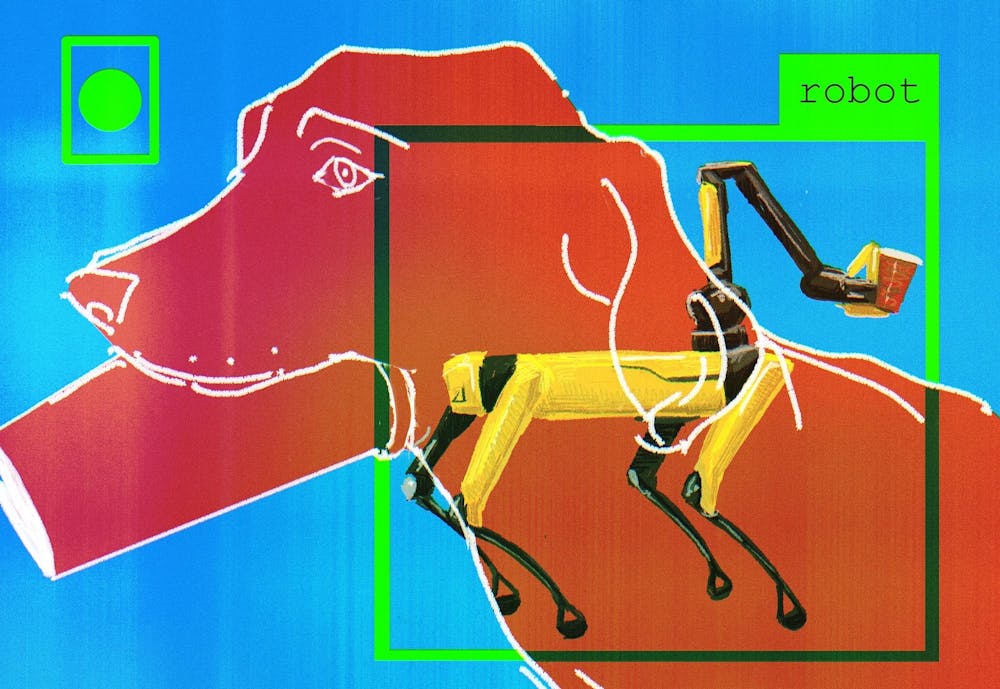

Robots are increasingly present in homes and healthcare facilities to assist humans in daily tasks, but communication with them can be unreliable. Brown researchers have developed a new approach to help robots — such as Boston Dynamics’s robotic dog, “Spot” — more accurately identify and retrieve items for humans. The approach is inspired by how humans communicate with dogs.

Led by computer science doctoral candidate Ivy He GS, the study investigated how robots might be able to interpret human gestures in the same way as dogs. Researchers used a modular strategy to help the robots figure out how to locate and retrieve target objects using inputs from both human language and gestures.

Professor of Computer Science and Associate Professor of Engineering Stefanie Tellex, the principal investigator on the study, wrote in an email to The Herald that the researchers were “inspired by how humans and dogs collaborate, for example on agility tasks or search tasks.”

“Dogs are able to follow human pointing gestures, and we wanted our robots to do it too,” Tellex wrote.

The team was inspired by the Brown Dog Lab, run by Associate Professor of Cognitive and Psychological Sciences Daphna Buchsbaum ’02, which has investigated how dogs interpret human gestures like pointing, according to He.

Building on Buchsbaum’s research, He and Madeline Pelgrim GS, a doctoral candidate in the Department of Cognitive and Psychological Sciences, conducted a separate study on the cognitive science of how humans communicate through pointing gestures, which create a vector that directs a dog toward an object.

In that previous study, He and Pelgrim used depth tracking and skeleton tracking to understand the body language cues people naturally used, such as which arm they preferred or whether they tended to point across their body, Pelgrim told The Herald.

He drew on this study to train robots to recognize gestures. “We found that when pointing towards an object close by, we most likely use our finger,” He said. “But when we point towards objects that are further away, we use (our eyes) to align the gaze direction.”

Using this information about how humans point for dogs, the researchers programmed robots to recognize the same cues and fetch the desired item. They used a multimodal framework, in which different cues such as language, eyeline and pointing gestures can be represented mathematically to the robot, He said. According to Tellex, this work enabled the robots to search for “an open-ended object based on arbitrary language and gesture,” while “previous approaches relied on a pre-defined set of objects” to pick from.

Unlike the controlled environments of factories or warehouses, everyday communication in homes is filled with ambiguity that current robotic systems aren’t built for. Multimodal instruction, which integrates language, gesture and visual perception, helps robots cope with these real-life conditions.

“I think this is the preliminary work that shows the feasibility of (having) a robot that can infer (from) ambiguous and vague human instructions,” He added.

“What we found is that with the multimodal instruction, a robot is able to search more efficiently and is able to achieve a success rate around 88%,” He said.

While the results of her study are promising, He said more research is needed before the system can be deployed in some real-world settings. Cluttered environments, for example, still pose more problems for the robots.

Pelgrim said the study represents a long-awaited milestone for the field and that bringing computer science together with cognitive and psychological sciences research has been a particular highlight of the project.

“People have been writing about wanting to use dog-human interaction as an inspiration for dog-robot interactions since the mid-2010s, if not earlier, and this is now actually getting out there as real empirical work that’s been done,” Pelgrim said.