Even before most students had returned to campus following winter break, Metcalf Auditorium filled last Thursday and Friday as students and faculty attended “Beyond Deep Learning,” a workshop designed to showcase interdisciplinary perspectives on artificial intelligence and its future.

Artificial intelligence is a means of enabling computers “to solve tasks in ways that usually resemble human intelligence,” said Michael Frank, professor of cognitive, linguistic and psychological sciences. Deep learning, a subfield of AI, relies on neural networks that are inspired by the biology of neurons in the human brain and designed to solve various problems, Frank said. These networks are used in technology that is becoming increasingly common. For example, Google Translate uses deep neural networks to translate between languages, while Tesla cars use the technology to detect pedestrians and signs on the road, he added.

Stephanie Jones, associate professor of neuroscience, said deep learning can also be used for health applications such as categorizing data sets to determine if a patient has cancer.

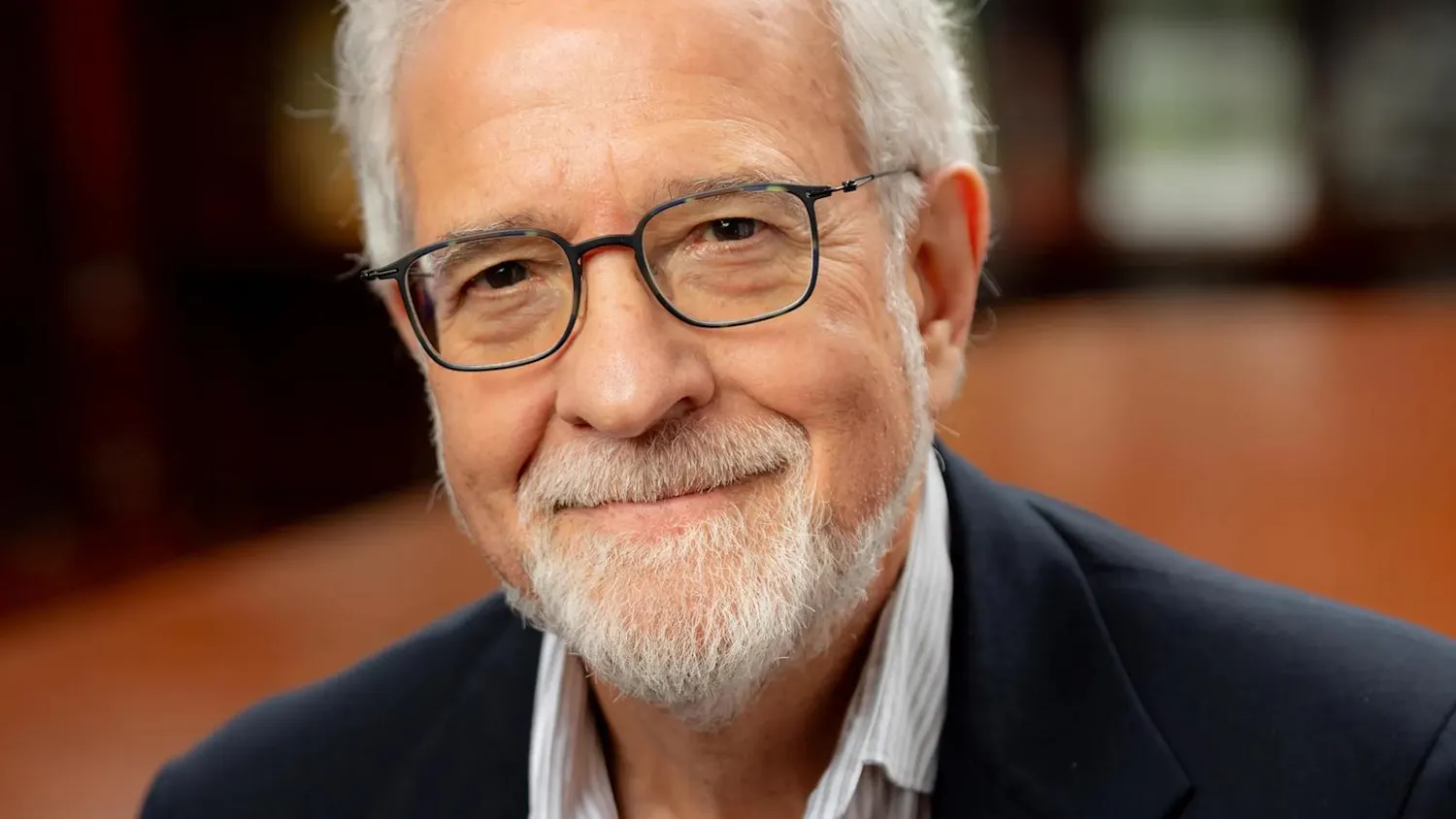

While deep learning has led to a number of breakthroughs in recent years, the technique is nowhere near the level of intelligence of the human brain, said Thomas Serre, associate professor of cognitive, linguistic and psychological sciences. The workshop was organized by Serre along with members of his lab and brought together researchers from computer science, engineering and cognitive science to work toward the next generation of AI. Attendees hoped to gain insight from biological mechanisms to create better algorithms. The team decided to organize the event based on their work, which seeks to “reverse-engineer the visual system” using knowledge pulled from biology, he explained.

The workshop featured a series of keynote presentations from University faculty as well as other leading scientists in the field. After the talks, participants from different departments broke into small groups to debate the topics raised. These discussions, along with the public presentations, addressed both the successes and limitations of deep learning, Frank said, adding that hearing world experts present their research showed why it may be necessary to take new approaches to AI. The workshop was a chance to “start the conversation about how we might integrate our efforts to make machines better and also learn something about the human brain,” Jones said.

“Beyond Deep Learning” was organized as the culmination of Serre’s Wintersession class, CLPS 1950: “Deep Learning in Brains, Minds and Machines.” On the last two days of the course, undergraduate students were exposed to experts working on different components of AI and gained the tools to “assess where the state of the art lies … (and determine AI’s) strengths and weaknesses,” Serre said. While progress in AI is substantial, it is often “overhyped” in the media, especially as the topic has been the focus of many startups, he added. In contrast to that hype, Serre intended to expose his students to the reality of the field through first-hand experience. Serre’s undergraduate students were engaged, asking more than half of the questions during the question-and-answer sessions following the lectures, he said.

The workshop will pick up again in April with four new speakers to continue the discussions started last week.